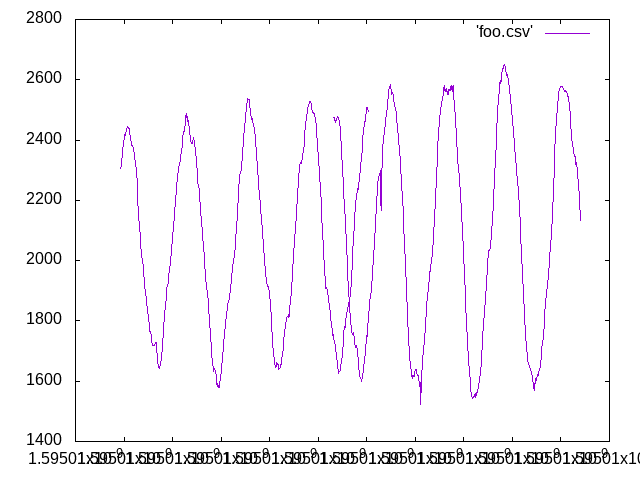

I definitely still have timing problems. I happened to catch a squeak from my office chair (hurrah for squeaky chairs) which spanned two captured buffers. Here’s what it looks like:

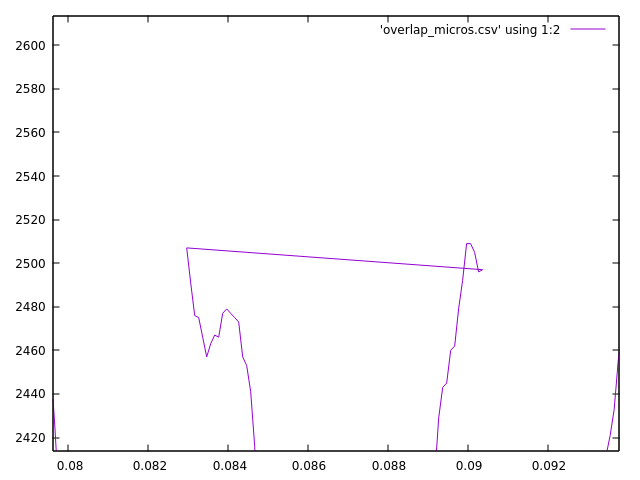

As you can clearly see, there’s a definite backwards time jump. With a little more gnuplot-fu, I managed to get a clearer picture that shows a ~6.5 ms backwards jump.

There are several sources of error that I need to track down for this.

- Assuming the timer interrupt is perfect

- Assuming that time never jumps backward

- Assuming that every reading takes exactly the same amount of time

My first poor assumption is that the timer interrupt fires exactly every 100 microseconds (as it would for a perfect 10 kHz signal). However, if it actually fired every 110 microseconds (say), which is approximately a 9 kHz signal, then over 512 readings (the size of the buffer) the cumulative error would be 5120 microseconds, or ~5 milliseconds. A negative 6.5 ms error would indicate an actual interval of about 87 microseconds and a clock frequency of about 11.5 kHz. I can correct for this on average by calculating the time it takes to read a whole buffer and dividing by the size of the buffer. I’ll do that next.
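To make that arithmetic concrete, here is a rough Python sketch of the drift calculation and the per-buffer averaging. The function names are made up for illustration, and it assumes I can get a microsecond timestamp at the start of each buffer and at the start of the following one, so that the span covers exactly 512 intervals.

```python
NOMINAL_INTERVAL_US = 100.0  # what a perfect 10 kHz timer would give
BUFFER_SIZE = 512            # readings per captured buffer


def cumulative_error_us(actual_interval_us,
                        nominal_interval_us=NOMINAL_INTERVAL_US,
                        n_readings=BUFFER_SIZE):
    """Total timestamp error built up over one buffer if the real
    interval differs from the nominal one."""
    return (actual_interval_us - nominal_interval_us) * n_readings


def implied_interval_us(error_us,
                        nominal_interval_us=NOMINAL_INTERVAL_US,
                        n_readings=BUFFER_SIZE):
    """Inverse of the above: the real interval that would explain an
    observed error across one buffer."""
    return nominal_interval_us + error_us / n_readings


def average_interval_us(buffer_start_us, next_buffer_start_us,
                        n_readings=BUFFER_SIZE):
    """Measured average interval, assuming (hypothetically) that both
    timestamps are available and span exactly n_readings intervals."""
    return (next_buffer_start_us - buffer_start_us) / n_readings


def sample_times_us(buffer_start_us, interval_us, n_readings=BUFFER_SIZE):
    """Rebuild a timestamp for every reading from the averaged interval."""
    return [buffer_start_us + i * interval_us for i in range(n_readings)]


if __name__ == "__main__":
    print(cumulative_error_us(110.0))            # the 110 us example
    interval = implied_interval_us(-6500.0)      # the -6.5 ms jump
    print(interval, 1e6 / interval)              # interval in us, rate in Hz
```

This only corrects the average interval over a buffer; it says nothing about jitter between individual readings inside it.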

My second poor assumption is that my timer never jumps backwards. As I’ve documented, it does, though I’ve only observed jumps of a few tens of microseconds. That doesn’t mean it doesn’t jump more at other times, however, and I need to find a way to model that.
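A first step towards modelling it is simply measuring how often and how far the clock goes backwards. This is a minimal sketch, not what my capture code currently does: it assumes I have a list of timestamps in microseconds in the order the readings were taken, and `find_backward_jumps` is a name I made up for it.

```python
def find_backward_jumps(timestamps_us):
    """Return (index, size_us) for every reading whose timestamp is
    earlier than the one before it. size_us is reported as a positive
    number of microseconds."""
    jumps = []
    for i in range(1, len(timestamps_us)):
        delta = timestamps_us[i] - timestamps_us[i - 1]
        if delta < 0:
            jumps.append((i, -delta))
    return jumps


if __name__ == "__main__":
    # Made-up timestamps with one 50 us backwards step at index 3.
    print(find_backward_jumps([0, 100, 200, 150, 250]))
```

Running this over the stitched-together timestamps of consecutive buffers would show whether the big jumps only happen at buffer boundaries or also in the middle of a buffer.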

My third poor assumption is that every reading takes the same time, whether that’s 100 micros, 110 micros, or 90 micros. This is the hardest to measure, because my clock just isn’t accurate enough to measure a 10 microsecond skew. I suspect that I’m just going to have to punt on this one and assume the buffer-wide average is good enough. It’s probably good enough to account for the drift in clock frequency with temperature.