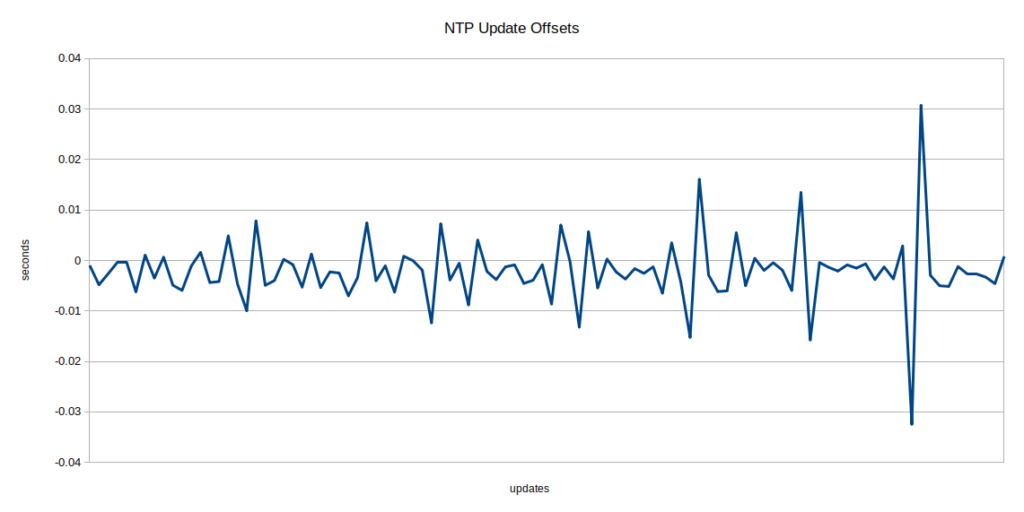

One of the problems I’d been seeing was that every time I synced with the NTP server, the clock would jump forward or back anywhere from 15 milliseconds to 100 milliseconds or more. I even had some cases where readings were appearing out of order because they happened right after one of these jumps.

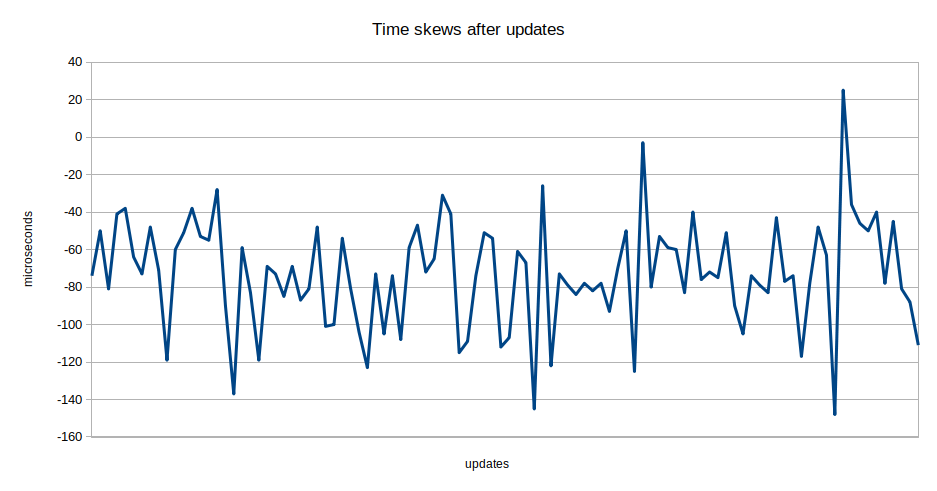

With a bit more hairy math, I’ve got it spreading each correction out over a full second and adaptively adjusting its mapping of the internal clock to the real world by continually skewing its reading of the micros() call slightly. It’s still jumping slightly (and backwards, which shouldn’t be possible now, so I likely have some subtle bug), but the jumps are fairly consistently -70 to -85 microseconds, which is shorter than the time it takes to read a single data point, so I doubt it will cause much trouble.

That’s not to say that everything is perfect. The offsets I’m measuring, while smaller, are still large (±1000 to 2000 microseconds), but at least they’re not causing the readings to jump around as much. This might be due to clock instability on the server side or on the microcontroller side. If it’s on the microcontroller side, then my hopes of keeping the individual microcontrollers in sync might be in trouble. I’ll have to wait ’til I have more than one fully functional to test this theory.